Sun Apr 26 2020

Making FastComments Realtime

One great part of trying to sell a product is that you get a lot of great, free ideas. Sometimes the person who turned you down even decides to become your customer later - after you bugged them and your product wasn't good enough at first.

I contacted one company that basically sold pub-sub solutions and was using a competitor's comment system on its site. When I tried to sell them on FastComments they politely said no. However, they suggested it'd be cool if FastComments was real time, and said they'd give me a great discount on their pub-sub service.

Sales tactics aside, I looked at my TODO list. I had some things I really wanted to do, but I couldn't stop thinking about making FastComments real time. It bugged me in the shower, and while I was working on other features. So I pulled the trigger, and after a weekend of work FastComments is now real time!

Did I use the potential customer's pub-sub service? No. It was far too expensive. Running an Nchan/Nginx cluster is a very easy, secure, and scalable way to handle fan-out, and I had experience doing it with Watch.ly. Nchan can handle way more connections and throughput than most applications will ever see. So, Nchan will handle our WebSocket connections.

I decided on a pub-sub approach - there would be a publicly exposed /subscribe endpoint that your browser connects to. The browser cannot send messages over this connection - it can only receive. Then, there's a /publish endpoint that only listens on a local IP that the backend instances have access to.

Scaling Nchan

Scaling Nchan is relatively easy - so nothing very interesting here. You deploy more instances, add a Redis cluster, and then do the things required for your OS to make it accept more connections than default.

Deploying Nchan

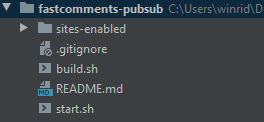

Deployment takes about two seconds using Orcha, the build/deployment software I've been working on. For example, here's the complete file structure for defining a deployment of Nchan right now. I just push a change to the repo and I'm done - the cluster updates with the latest configuration, similar to Terraform or Ansible.

Orcha will be open sourced at some point.

Architecture and Problems

The following problems had to be solved and decisions made:

- When to push new comment and vote events.

- What the UI should do when a new comment or vote has been received.

- How to re-render part of the widget safely to update the "Show New X Comments" button.

- How to do this without increasing the client side script size substantially.

When to push new comment and vote events.

This might sound like an easy question, but the problem is that when an event is pushed we need to know who to send it to. We solve that by using channels. Each page gets an ID derived from its URL (basically a clean version of the URL), and that ID is the channel the client listens on for new messages.

The backend says "here's a new comment for this URL ID", and Nchan forwards the message to every client subscribed to that channel.
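As a rough illustration of what "a clean version of the URL" could mean, here is a minimal sketch. The function name `toChannelId` and the specific cleaning rules (ignoring protocol, query string, fragment, trailing slashes and case) are assumptions made for this example, not the rules FastComments actually applies.

```typescript
// Hypothetical helper: turns a page URL into a channel ID.
// The cleaning rules below are assumptions for illustration only.
function toChannelId(pageUrl: string): string {
  return pageUrl
    .toLowerCase()
    .replace(/^[a-z]+:\/\//, "")  // drop the protocol
    .replace(/[?#].*$/, "")       // drop query string and fragment
    .replace(/\/+$/, "")          // drop trailing slashes
    .replace(/[^a-z0-9]+/g, "-"); // collapse everything else into dashes
}

// Two visitors who reach the same page through slightly different URLs
// end up listening on the same channel.
const channelA = toChannelId("https://Example.com/blog/post/?utm_source=x#comments");
const channelB = toChannelId("http://example.com/blog/post");
console.log(channelA === channelB, channelA);
```

The point of normalizing is that the backend and every browser must compute the same ID for the same page. Otherwise published events would land on a channel nobody is subscribed to.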

So here's our first problem. The person that sent the comment is also on that page, so they'll get the event. How do you efficiently do a de-dupe in the UI to prevent that extra comment from showing? We can't do it server-side. Our fan-out mechanism should remain very simple and efficient, and Nginx/Nchan does not support this behavior. It also couldn't, since it doesn't know the event originated from the user. From Nchan's perspective, it originates from our servers.

Obviously we need to do a check - see if that comment is already in memory on the client. The approach I went with was to create a broadcast id on the client and pass it along with the request. That id is then included in the event sent to each client. If the broadcast id is in the client's list of broadcast ids, we know we can ignore that event.

This may seem like an extra step. Why not just skip rendering the comment locally and let the event from the pub-sub system cause it to be rendered? The first reason is that this may cause some lag, which does not feel satisfying. The other reason is that the event will not be broadcast if, for example, the comment was marked as spam in real time. You'll still want to show the user the comment they just posted, though. So we can't rely on pub-sub messages to give users feedback on their own comments - that's asking for trouble.

We do the same thing for vote events, to prevent a single click from making the number jump twice locally.
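The broadcast id check described above can be sketched roughly as follows. The `LiveEvent` shape, the `BroadcastTracker` class and the way ids are generated are all assumptions made for this example. The post only says that the client creates an id, sends it with the request, and ignores events that carry an id it knows.

```typescript
// Hypothetical event shape; the real payload is not shown in this post.
interface LiveEvent {
  type: "new-comment" | "new-vote";
  broadcastId: string;
}

class BroadcastTracker {
  // Ids of the requests this browser tab has sent itself.
  private readonly sent: { [id: string]: boolean } = {};
  private counter = 0;

  // Create an id to attach to an outgoing comment or vote request.
  // The counter keeps ids unique within this tab; the random suffix makes
  // collisions with ids from other clients unlikely.
  create(): string {
    const id = (this.counter++).toString(36) + "-" + Math.random().toString(36).slice(2);
    this.sent[id] = true;
    return id;
  }

  // True when the event is an echo of something this client already rendered.
  isOwnEvent(event: LiveEvent): boolean {
    return this.sent[event.broadcastId] === true;
  }
}

const tracker = new BroadcastTracker();
const myId = tracker.create(); // sent along with the "post comment" request

// Later, events arrive over the subscribe connection:
const echo: LiveEvent = { type: "new-comment", broadcastId: myId };
const other: LiveEvent = { type: "new-vote", broadcastId: "someone-else" };
console.log(tracker.isOwnEvent(echo));  // our own comment: ignore the event
console.log(tracker.isOwnEvent(other)); // someone else's vote: apply it
```

Because the id is created before the request is sent, the check works even if the pub-sub event arrives before the HTTP response does.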

So to recap, this approach does two things:

- Prevent race conditions.

- Ensure the correct people see the correct comments and vote changes in real time.

What the UI should do when a new comment or vote has been received.

First of all, we definitely don't want to render new comments as they are posted. This would distract from the content someone may be trying to read or interact with. So what we need to do is render little buttons like "5 New Comments - Click to View" in the right places.

What we want to do is show a count on the first visible parent comment like:

"8 New Comments - Click to show"

This means that you could have:

Visible Comment (2 new comments, click to show)

- Hidden New Comment

- Hidden New Comment

So we need to walk the tree to find the first visible parent comment and then calculate how many hidden comments are under that parent.

We also need to insert the new comment at the right point in the tree, and mark it as hidden for now. This way if something triggers a full re-render of the tree the comment is not rendered.

If the received comment doesn't have a parent - like when replying to a page instead of a parent comment - we can just treat the page as the root and don't have to walk any tree.

We make an assumption: We should always receive the events in order so that we can just walk the tree backwards.

Then we just need to render that text/button. Clicking it flips the hidden flag on those comments and re-renders that part of the tree.
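The tree handling described in this section can be sketched like this. The `CommentNode` shape and the function names are hypothetical, and rendering is left out entirely. The sketch only covers inserting a hidden comment, finding the first visible ancestor, counting hidden comments under it, and revealing them on click. It leans on the same assumption as above: events arrive in order, so a parent is always known before its replies.

```typescript
// Hypothetical comment node; field names are assumptions for this sketch.
interface CommentNode {
  id: string;
  parentId: string | null; // null means a reply to the page itself
  hidden: boolean;
  children: CommentNode[];
}

type CommentIndex = { [id: string]: CommentNode };

// Insert a live comment as hidden. Returns the first visible ancestor, which
// is where the "N New Comments" button goes, or null when the button belongs
// at the page root.
function insertHidden(
  byId: CommentIndex,
  roots: CommentNode[],
  incoming: { id: string; parentId: string | null }
): CommentNode | null {
  const node: CommentNode = { id: incoming.id, parentId: incoming.parentId, hidden: true, children: [] };
  byId[node.id] = node;
  const parent = node.parentId !== null ? byId[node.parentId] : undefined;
  if (!parent) {
    // Reply to the page (or unknown parent): treat the page as the root.
    roots.push(node);
    return null;
  }
  parent.children.push(node);
  // Walk up until we reach a comment the reader can actually see.
  let current: CommentNode | undefined = parent;
  while (current && current.hidden) {
    current = current.parentId !== null ? byId[current.parentId] : undefined;
  }
  return current || null;
}

// Count hidden comments below a visible comment (or below the page root).
function countHidden(children: CommentNode[]): number {
  let count = 0;
  for (const child of children) {
    if (child.hidden) count += 1 + countHidden(child.children);
  }
  return count;
}

// Called when the button is clicked: un-hide everything it was counting.
function reveal(children: CommentNode[]): void {
  for (const child of children) {
    if (child.hidden) {
      child.hidden = false;
      reveal(child.children);
    }
  }
}

// Usage: one visible comment, then a new reply and a reply to that reply.
const byId: CommentIndex = {};
const roots: CommentNode[] = [];
const visible: CommentNode = { id: "c1", parentId: null, hidden: false, children: [] };
byId[visible.id] = visible;
roots.push(visible);

insertHidden(byId, roots, { id: "c2", parentId: "c1" });
const anchor = insertHidden(byId, roots, { id: "c3", parentId: "c2" });
console.log(anchor === visible, countHidden(visible.children)); // button on c1 counts 2
reveal(visible.children);
console.log(countHidden(visible.children)); // nothing left to show
```

Keeping the hidden flag on the node itself, rather than in a separate list, is what makes a full re-render safe: the renderer just skips anything marked hidden.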

Here it is in action! It's pretty satisfying to watch and use.

How to do this without increasing the client side script size substantially?

This is always the fun part of FastComments - optimization. In this case the story is really boring. It just wasn't much code. The logic is pretty straightforward. There's some simple graph/tree traversal, but that is pretty compact since our rendering mechanism had been kept simple.

I'm very happy with how things turned out - let me know what questions you have, and I'll happily update this article. Cheers.

Update 2022

FastComments no longer uses Nginx with Nchan. We found it to have stability problems that the community and original author were not able to figure out. It would randomly stop responding to publish events and hang.

FastComments now uses its own custom pub-sub system in Java, built on top of Vert.x. This has also allowed us to build some really cool features that would otherwise have been too expensive, as they would have required us to store extra state in a database.